Entropy Makes the Collatz Sequence Go Down

Bradley Berg November 21, 2018

Edited June 16, 2023

ABSTRACT

We look at the Collatz Sequence from an information theory

perspective to lay out its underlying computational mechanics.

The mechanisms are similar to those used in pseudo random number

generators and one way hashes.

An individual run is divided into three phases. In the first

phase the influence of the seed runs its course after information

contained in the initial seed is lost. Values are randomized in

the second phase. They follow the statistical model where the

average gain is just over 0.866; causing them to decline. The

third phase begins once a value goes below the seed; providing

that the series is not circular. At this point we know the run

will terminate at one.

An equivalent restatement of the Collatz sequence steps through

alternating chains of even and odd values. This variation

constitutes a pseudo random number generator. The operations

used to scramble values are unbiased which results in an even

distibution of ones and zeros. The entropy of this mechanism is

high so that in the second phase values are fairly randomized.

Notations:

* multiplication operator

^ exponentiation operator

-- logical difference (exclusive or) operator

-> "Transition to" the the next value in a sequence.

This is an informal explanation of the computational mechanics of the Collatz sequence. Similar computations are used in random number generators. Using the following definition Of the sequence the average odds of taking either step are even.

N is Even: N' = N / 2 N is Odd: N' = (3 * N + 1) / 2

The gain of a transition is its Output divided by the Input (N' / N). As N gets larger in the odd transition the "+ 1" term quickly becomes insignificant. To compute the gain for the odd transition in the limit we can safely drop the "+1"term.

Output / Input Gain

N is Even: {N / 2} / N 0.5

N is Odd: {(3 * N + 1) / 2} / N 1.5

The gain of a series of transitions is the product of the gains for each transition. We get the average gain of a sequence by using the probability of taking either an odd or even path.

Gs = 1.5 ^ p(odd) * .5 ^ p(even)

If a sequence produces uniformly randomized values then the chances of using either transitions are 50:50. In this case the choice of path is determined by the low order bit of the input value. This means the low bit would need to be uniformally random over the sequence. The average gain of a uniformly randomized sequence is then:

Average transaction gain = <transaction gain> ^ p(<transaction>)

Average odd gain = 1.5 ^ .5 = 1.22474

Average even gain = 0.5 ^ .5 = 0.70711

Average sequence gain: Ga = 1.22474 * 0.70711 = 0.86603+

The statistical average gain in each step is less than one so on average the sequence declines. However, the gain in a single run for a given Seed can vary significantly. There could potentially still be a series where the the total gain indefinitely exceeds one and never terminates.

Gain = 1.5 ^ p * 0.5 ^ (1 - p) = 1

ln( 1.5 ^ p * 0.5 ^ [1 - p]) = ln( 1 ) = 0

= ln( 1.5 ^ p ) + ln( 0.5 ^ (1 - p)

= p * ln( 1.5 ) + (1 - p) * ln( 0.5 )

p * ln( 1.5 ) = -(1 - p) * ln( 0.5 )

ln( 1.5 ) / ln( 0.5 ) = -(1 - p) / p

ln( 1.5 ) / ln( 0.5 ) = -1 / p + 1

ln( 1.5 ) / ln( 0.5 ) - 1 = -1 / p

p = -1 / {ln( 1.5 ) / ln( 0.5 ) - 1} = 0.63093+

Gain = 1.5^0.63 * 0.5^0.37 = 0.99898 Just under breaking even

Gain = 1.5^0.64 * 0.5^0.36 = 1.01001 Just over breaking even

For a series to run on forever it would have to sustain an average gain over one. To break even odd transitions would need to occur about 64% of the time. They would need to be applied over 1.7 times more than evens; which is substantial. It remains to be shown that the the series does not intrinsically favor odd transitions.

Consecutive iterations of the same kind of transition in a run form a chain. Even chains start with an even seed value that in binary have one or more trailing zeroes. After applying the transitions in an even chain the result simply has the zeros removed.

Odd chains consume an odd input and can have multiple odd intermediate values. Eventually an Odd chain transitions to an even number. The number of consecutive low order one bits determines the chain length. For example, an input of 19 is a binary 10011 so the subsequent chain has two Odd transitions: 19 -> 29 -> 44

A odd chain of length k takes an input of the form: j * 2^k - 1

The output from the chain simplifies to: j * 3^k - 1

N1 = (3 * N + 1) / 2 First transition

= (3 * [j * 2^k - 1] + 1) / 2 Substitute N = j * 2^k - 1

= ([3 * j * 2^k - 3] + 1) / 2

= [3 * j * 2^k - 2] / 2

= 3^1 * j * 2^[k-1] - 1

Ni+1 = (3 * {3^i * j * 2^[k-i] - 1} + 1) / 2 Subsequent transitions

= ({9 * j * 2^[k-i] - 3} + 1) / 2

= {9 * j * 2^[k-i] - 2} / 2

= 3^[i+1] * j * 2^[k-i-1] - 1

Nk = 3^k * j - 1 Odd chain output

A Collatz sequence will have segments with consecutive runs of even and odd chains. If these chains have length k then the outputs have the form:

Chain Input Chain Output Chain Gain

N is Even: j * 2^k j 0.5^k

N is Odd: j * 2^k - 1 j * 3^k - 1 1.5^k

Note that for even inputs j is the input shifted right by the number of its trailing zeros. For odd inputs j is 1 plus the input shifted right by the number of trailing ones. For reference, here are the first few chains for the series beginning with a seed of 27.

Original Collatz Definition Even Odd j k

27 -> 41 -> 124 -> 62 27 -> 62 7 2

-> 31 -> 31 31 1

-> 47 -> 71 -> 107 -> 161 -> 242 -> 242 1 5

-> 121 -> 121 121 1

-> 364 -> 182 -> 182 61 1

-> 91 -> 91 91 1

-> 137 -> 206 -> 206 23 2

-> 103 -> 103 103 1

-> 155 -> 233 -> 350 -> 350 13 3

-> 175 -> 175 175 1

-> 263 -> 395 -> 593 -> 890 -> 890 11 4

Each series alternates between even and odd chains. We can represent this aspect algebraically by merging both into a single step. This gives us a series defined as a single transition. Since all intermediate values using the combined definition are even, an initial odd value needs to first transition one step using the "3 * n + 1" rule to get the first even number.

Every input has the binary form: j ones zeros

N = ([j + 1] * 2^ko - 1) * 2^kz --> [j + 1] * 3^ko - 1

where: kz - number of trailing zeros in N

ko - number of the next higher set of ones in N

j - N shifted right by kz + ko bits: N / 2^[kz + ko]

Using the previous example, initially we transition 27 to 82. From there the next few steps are:

j ko kz binary

82 -> 41 -> 124 -> 62 20 1 2 101_0010

-> 31 -> 484 -> 242 0 5 1 11_1110

-> 121 -> 364 -> 182 60 1 1 1111_0010

-> 91 -> 274 -> 206 22 2 1 1011_0110

-> 103 -> 310 -> 466 12 3 1 1100_1110

-> 233 -> 700 -> 350 116 1 1 1_1101_0010

-> 593 -> 1780 -> 890 10 4 1 1_0101_1110

Statistical averages only hold when the odds are fair. In this section we show why the dice are not loaded. Shannon entropy is a measure of information denoting the level of uncertainty about the possible outcomes of a random variable.

H = -p0 * log2( p0 ) + -p1 * log2( p1 )

where: p0 is the probability a bit is zero.

p1 is the probability a bit is one (1 - p0).

A set of coin tosses has p0 = p1 =.5 so its entropy is 1, totally random. When looking at the entropy of bits in a number then p0 is the percentage of zero bits. For the binary number, 1010_1111, p0 is .25 (H = 0.811). Strings of all ones or zeros have no entropy (H = 0). In these examples we are measuring the bits in a number horizontally.

Bits in a series of numbers have two dimensions - horizontal bits in each individual value and vertical bits over the duration of the series. We can also measure entropy vertically over a number series. That means we can observe at a select bit in each value as the series progresses.

For Collatz the low order bit is of interest because it determines if a number is odd or even. In turn that determines which transition to take. When the entropy of the low order bit is high then on average there will be nearly as many even transitions as odds.

Each kind of chain takes a value where the low order bits are a string of zeros or ones and either removes or replaces them. Even inputs remove low order zeros. The expression for odd chain inputs has a 2^k term that transitions to 3^k.

Strings of zeros or ones have no entropy and the 3^k term has positive entropy. This adds entropy each time a chain of transitions is applied. Removing the low order zeros that have no entropy only increases the entropy of the result. Entropy is also increased by removing the repeated ones and additionally replacing them with scrambled bits. The upper bits, j, are scrambled by multiplying j by a power of 3. This uniformly randomizes the choice of transition to apply next.

The seed can be contrived to produce a series of any desired length. The longer the series the larger the seed has to be. It has to contain enough information to influence the desired outcome. Initially, as the series progresses the information contained in the seed is lost. When there are two ways to reach the same number in a sequence we lose the information about which path was taken.

Odd numbers always transition (3 * n + 1) to even numbers so an odd value cannot be reached from another odd. You can reach certain even numbers from either an odd or even transition. For example, an output of 16 can be reached from either 32 or 5.

32 -> 32 / 2 = 16 5 -> 3 * 5 + 1 = 16

Whenever transitioning to an even value such that (even - 1) % 3 = 0 then the previous value could have been either:

2 * even or (even - 1) / 3

For Collatz, a bit of information contained in the seed is lost each time one of these select even numbers is reached. After all bits in the seed are scrubbed the initial phase is complete. Any attempt to contrive a seed to skew results can only directly affect values during this phase.

One way hash functions use this concept of lost information. Secure hashes have very many ways to reach each hash value. This is how passwords are encoded so they can be authenticated. In this sense Collatz is a trivial example of a one way hash.

This next phase is key as this is where the sequence runs below the Seed. The sequence is rewritten as a pseudo random number generator. Uniformly randomized values eventually trend towards their average. In turn this drives transitions towards their average gain. In the introduction we showed that Collatz has an average gain of 0.86603; eventually driving the series below the seed value.

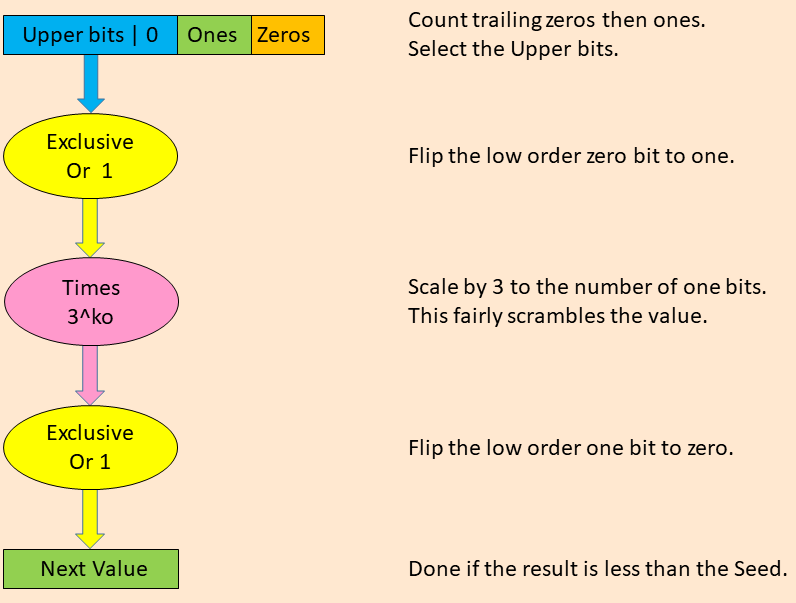

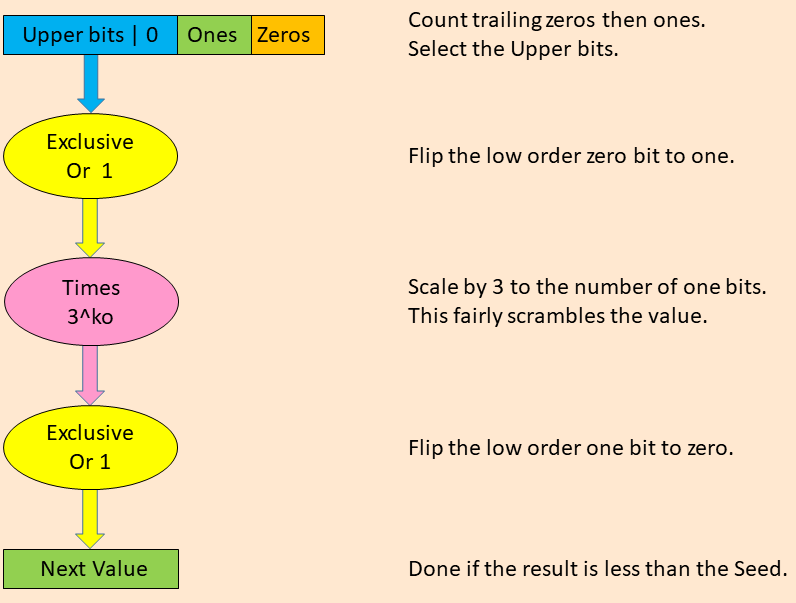

Next we'll be using the the combined series from section 1.2 for each randomization step. The value, j, is always even so the [j + 1] term will simply set the low order bit with no carry. Also the product will have an odd result so that decrementing by 1 will just clear the low order bit.

Input: ([j + 1] * 2^ko - 1) * 2^kz Result: [j + 1] * 3^ko - 1 = [j -- 1] * 3^ko -- 1

A pseudo random number generator repeatedly applies a function to produce a series of values. For it to produce uniformly random numbers, operators cannot be biased towards producing either more ones or zeros. In a uniform sequence the entropy will be one. If it is not uniform the bias will show up in the operators.

If you split a random number into two parts, each part will still be random. Using the upper bits from the input still gives random values. However, the way the value is split the selected upper value will be even. The low order bit is zero and is not part of the randomized portion.

The first Xor sets the low bit of the selected region. This is balanced out by clearing it in the final step with another Xor.

The product of a random variable by a constant is also random, but with a larger gap between them. Multiplying random numbers from 1 to 10 by 3 yields random numbers from 3 to 33. They simply have a gap of 3 between them instead of 1.

The product used to scramble values can be broken down into repeated additions. The following table shows all combinations for the three inputs (A, B, Carry In) and the two outputs (Sum, Carry Out). It also shows changes (xor) between the sum and inputs A and B.

Carry Carry A xor B xor

A B In Sum Out Sum Sum

0 0 0 | 0 0 | 0 0

0 0 1 | 1 0 | 1 1

0 1 0 | 1 0 | 1 0

0 1 1 | 0 1 | 0 1

1 0 0 | 1 0 | 0 1

1 0 1 | 0 1 | 1 0

1 1 0 | 0 1 | 1 1

1 1 1 | 1 1 | 0 0

Input bits A and B are vertically aligned and are altered by addition. Carries are applied horizontally and propagate to higher order bits. This way bits in both directions become scrambled.

Note that all the columns in the table are different. This shows how bits are scrambled to produce randomized results. Also note that all columns contain 4 zeros and 4 ones. This balance produces results that are unbiased towards either zero or one bits. The end result is a series of uniformly distributed pseudo random numbers.

Outputs in any individual series depend on the values kz and ko. The more random they are then the more random the series. The k values measure the width of a horizontal subset of bits in each value. Pseudo random number generators that conflate operations on horizontal and vertical sets of bits rely on the independence of these orthogonal values.

The repeated zeros and then ones in the low order bits that have low entropy are continuously removed and replaced with scrambled bits. This creates a self regulating system that continuosly randomizes the lower bits. Those bits control the selection operation in the next round.

When runs have uniformly random values then going back to the original Collatz sequence definition, the average number of even and odd transitions will be the same. In turn this causes the run to decrease since the average gain is less than one.

If the sequence was not uniformly random then we would see bias in the arithmetic used in transitions. When the result of each of these steps is unbiased the series acts as a random number generator.

Examples where seeds produce long runs will have highly skewed values in the beginning, but that cannot be sustained. As values become more randomized as the series progresses the will trend toward average results. Even if you get lucky and call the results of several coin tosses, your luck will run out in the long run.

The previous randomization phase leaves us with a value of N that is below the seed. It is well understood that once this happens we know the series will eventually terminate at one. Firstly, we know all values below some arbitrary small number M (say 10) transition to one.

Next, starting with the next higher seed, M + 1, we transition until it reaches M or less, Since we already know seeds of M or less will reach one, by induction, a series that goes below its seed will reach one.

During this final phase the law of small numbers takes over and the series will likely behave less randomly. Once one is reached all information about previous values that led up to it is gone. The final value is a 1 which has no entropy.

Example runs do not carry weight because we know in advance they all will work. They are simply a sanity check and gives you a feel for how things work. Entropy measures are more useful in longer sample runs. You can expect the first few values to have higher entropy. However, if an infinite run could be constructed the entropy would need to be sustained well below one.

Individual runs will typically have some jitter. There may be less jitter on very long runs, but they are hard to come by. Long runs need to be biased to one early on in order to grow. Examples of long runs will tend to exhibit values with a surplus of one bits. Short runs where evens dominate won't even make it to the randomization phase.

For an infinite run we've previously shown the there needs to be 64% or more ones. This gives and entropy of:

H = -.36 * log2( .36 ) + -.64 * log2( .64 ) = 0.94268

At first glance that may seem high as the ratio of ones to zeros is over 1.7. Randomized traces are typically show entropy over 0.99; exceeding the 0.94268 minimal requirement.

This trace shows the entropy after every fifth step in the randomization phase. The entropy metric covers bits in values up though each step. At the point where samples are taken they are always even so the low order bit is discarded. The bitwise width of values also varies. Upper bits are truncated so that all samples are below the seed.

Seed = 4_50449_75045_09599 = #10_00d1_0da5_de9f

Iteration Entropy Ones Zeros

5 0.99483 141 119

10 0.99277 286 234

15 0.99384 426 354

20 0.99506 563 477

25 0.99836 681 619

30 0.99820 819 741

35 0.99736 965 855

40 0.99691 1108 972

45 0.99756 1238 1102

50 0.99808 1367 1233

55 0.99780 1509 1351

60 0.99749 1652 1468

65 0.99772 1785 1595

70 0.99835 1907 1733

75 0.99829 2045 1855

80 0.99840 2178 1982

85 0.99864 2306 2114

90 0.99849 2447 2233

95 0.99891 2566 2374

100 0.99917 2688 2512

105 0.99941 2808 2652

110 0.99945 2939 2781

111 0.99949 2963 2809

To see how the sequence performs in general we ran over a million consecutive even numbers through a single iteration. This next chart shows the cumulative entropy of the resulting values.

Iteration Entropy Ones Zeros 50_000 0.99350 475_206 574_794 100_000 0.99721 984_678 1_115_322 150_000 0.99886 1_512_405 1_637_595 200_000 0.99966 2_054_339 2_145_661 250_000 0.99984 2_585_879 2_664_121 300_000 0.99970 3_086_254 3_213_746 350_000 0.99987 3_625_941 3_724_059 400_000 0.99970 4_113_665 4_286_335 450_000 0.99976 4_638_681 4_811_319 500_000 0.99983 5_168_585 5_331_415 550_000 0.99991 5_711_156 5_838_844 600_000 0.99996 6_255_089 6_344_911 650_000 1.00000 6_816_718 6_833_282 700_000 0.99994 7_415_041 7_284_959 750_000 1.00000 7_882_886 7_867_114 800_000 1.00000 8_392_173 8_407_827 850_000 1.00000 8_925_576 8_924_424 900_000 1.00000 9_428_185 9_471_815 950_000 0.99999 9_944_542 10_005_458 1_000_000 0.99999 10_466_775 10_533_225 1_048_576 1.00000 11_012_891 11_007_205

For this next chart the sequence is contorted by forcing some bits in the result to one. This shows how skewed the operations have to be in order for the entropy to drop to a level (0.94268) where it would increase. 11 out of 20 bits needed to be forced to one to sufficiently skew the result.

Value Of Final Bits Set Entropy Ones Zeros 0 1.00000 11_012_891 11_007_205 #10_000 0.99995 11_102_306 10_917_790 #11_000 0.99971 11_229_280 10_790_816 #11_100 0.99931 11_350_975 10_669_121 #11_500 0.99916 11_385_687 10_634_409 #15_500 0.99892 11_435_329 10_584_767 #55_500 0.99703 11_716_482 10_303_614 #155_500 0.99727 11_687_638 10_332_458 #175_500 0.98541 12_573_465 9_446_631 #177_500 0.96792 13_323_124 8_696_972 #177_700 0.95400 13_775_529 8_244_567 #177_740 0.94266 14_093_550 7_926_546

The Collatz sequence integrates several mechanisms commonly used to create pseudo random number generators.

Any individual series is partitioned into three phases. In the initial phase the seed value can influence the outcome to produce arbitrarily long runs. After that the series generates randomized values until it goes below the seed value. From there it is guaranteed to reduce to one unless the series is circular.

A uniformly randomized series eventually moves towards a statistically average gain. For a Collatz series to sustain an average gain above one would require over 1.7 times more odd transitions than even. This is well above parity. Randomization forces the series to average out and decrease until it inevitably goes below the seed. Once it does that we know it will terminate.

The random behavior of the Collatz sequence makes it impossible to prove algebraically. The irony is that this randomness is the force that leads to convergence.